AI Reset: Back to the big picture

Amidst predictions of market corrections or a burst bubble in AI and related markets, it's a good time to correct our AI thinking.

There are many things to lament about contemporary AI discourse. My feeds and human interactions are clogged with hysterical predictions, fawning over unremarkable outputs, attempts to inflict FOMO on innocent bystanders, and denunciations of critical thinking as heresy. But what bugs me most, and what is potentially most harmful, is the way artificial intelligence, a seventy-year-old field rich in theory, possibilities, and practices, has been reduced to the generative AI boom initiated by ChatGPT in November 2022.

With no exaggeration, I can say that 95% of my unmanaged media, work interactions, and social conversations about AI center on genAI. On the internet, discourse follows money, and all the money - for investment, PR, and advertisements - swirls around genAI. As a result, we’re developing a blind spot to all of the other forms of AI that have the potential to do even more amazing things than create crap Super Bowl ads.

In the last couple of months, sober, non-AI-partisan voices have been debating the economic impact of all these resources being directed to genAI. The economic realities of hundreds of billions of dollars flowing into companies without business models and data centers without customers have engendered serious conversations about bubbles bursting and even crashes. At the very least, everyone seems to agree that there will be a series of closings, consolidations, and revaluations, or as they’re politely known, market corrections.

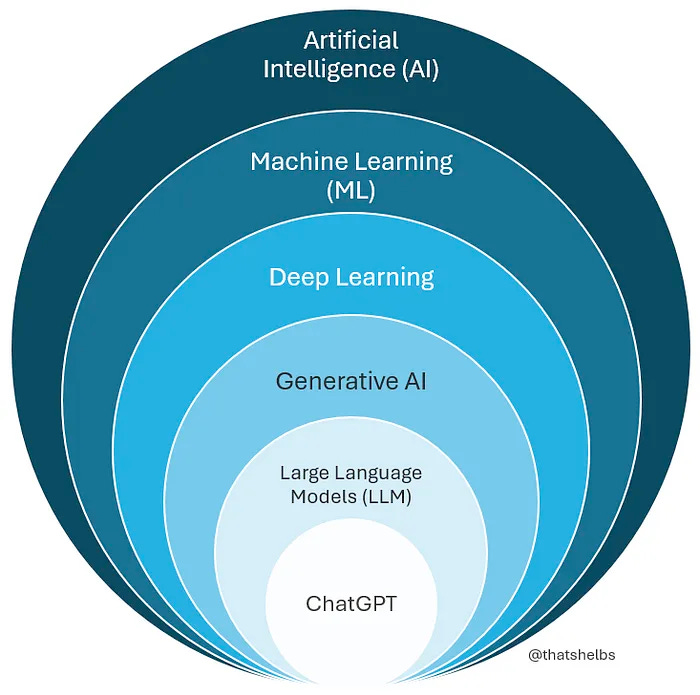

So now might be a good time to do a cognitive correction around AI and re-open our collective minds to the possibilities of the broader field of AI. Last fall, I enrolled in an MIT executive education program in AI, machine learning, and data science. The class is pretty intensive - 300+ hours of theory, math, and coding[1] - and involves a lot of reading and video viewing across the internet[2]. In every overview of AI I’ve seen, I encounter charts like this:

Do an image search for “diagram of AI landscape” and you’ll find endless variations on that chart:

Be careful to type “diagram of AI landscape”, though, as “AI landscape” will get you a lot of cheap AI-generated SciFi cover art:

The first chart, and all of the others you get through search, effectively highlight a critical fact we seem to be losing sight of: ChatGPT and LLMs are just one subset of AI overall. These charts show that there are a variety of other AI disciplines that can be used independently of genAI to create insight and value.

“There’s a lot of AI, most are not language models, they all make progress at their own pace, breakthroughs are unevenly distributed, and none of them are on some trajectory where they’re about to be dangerous”

- Cal Newport, Deep Questions podcast, Episode 380 “ChatGPT is not Alive”

I’ve died on too many hills arguing about words to care about a perfect definition of AI, but these distinctions matter. To my mind, the most exciting things happening in AI are not the note takers, summarizers, embedded assistants, email tweakers, or action figures. I’m more excited by the protein folding, drug research, and advanced data analytics offered by machine learning.

Reducing AI to generative AI causes us to miss:

- The value that can be gained from machine learning in all its flavors - supervised, unsupervised, deep learning, etc.

- The accessibility of other AI technologies which are much cheaper to use.

- The ability to tailor AI to your domain or business and build real competitive advantage.

Below are some of the things that machine learning has provided for us:

- Computer vision - recognizing and classifying various types of images, from letters and numbers to objects in photos

- Medical imaging - a complex, specialized subcategory of computer vision, this includes trained models that can differentiate between tumors and healthy tissue, spot and highlight patterns the human eye might miss, and predict or identify diseases from weak but real signals that evade our senses

- Autonomous vehicles and hardware - cars on the road, assemblers in warehouses, and robotics in various settings all rely on a complex mix of machine learning techniques

- Language translation

- Voice to text / voice recognition

- Satellite imaging - for terrestrial meteorology or intergalactic object discovery

- Biological structure prediction - studying how protein and molecule structures interact, impact disease states, and help create treatments

- Pattern detection and classification - taking vast datasets that our savanna brains can’t process and finding useful patterns or groupings. The classic cases are market segments and fraud detection, but machine learning can find patterns, groups, or classes in any kind of large dataset. Other areas include customer churn, IoT data around supply chains, cybersecurity threat detection, and more.
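To make the market-segment case concrete, here is a minimal sketch of k-means clustering, one common unsupervised technique, in plain Python. The customer numbers are invented for illustration, and the `kmeans` helper is a hypothetical toy rather than anything from the course or from a library; on real data you would more likely use a library implementation and scale your features first.

```python
import random


def kmeans(points, k, iterations=20, seed=0):
    """Toy k-means: returns (centroids, labels) for a list of numeric tuples."""
    rng = random.Random(seed)
    # Start from k randomly chosen data points as the initial centroids.
    centroids = rng.sample(points, k)

    def nearest(point):
        # Index of the centroid with the smallest squared Euclidean distance.
        distances = [
            sum((a - b) ** 2 for a, b in zip(point, centroid))
            for centroid in centroids
        ]
        return distances.index(min(distances))

    for _ in range(iterations):
        # Assignment step: group every point with its nearest centroid.
        clusters = [[] for _ in range(k)]
        for point in points:
            clusters[nearest(point)].append(point)
        # Update step: move each centroid to the mean of its members.
        # An empty cluster keeps its previous centroid.
        centroids = [
            tuple(sum(dim) / len(members) for dim in zip(*members))
            if members else centroids[i]
            for i, members in enumerate(clusters)
        ]

    labels = [nearest(point) for point in points]
    return centroids, labels


# Hypothetical customers: (monthly spend in dollars, store visits per month).
customers = [
    (20, 1), (25, 2), (22, 1), (30, 2),          # occasional shoppers
    (200, 12), (220, 15), (210, 11), (190, 13),  # frequent big spenders
]

centroids, labels = kmeans(customers, k=2)
for customer, label in zip(customers, labels):
    print(customer, "-> segment", label)
```

Nothing in the data says which customers belong together; the algorithm recovers the two groups from the numbers alone, which is the whole appeal of unsupervised learning on datasets too large to eyeball.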

Some of these things are old news, old enough, in fact, to be boring.[3] But while genAI hypesters are telling us what might be, the list above tells us what has already happened and will continue to - if we invest. By the way, these things were all achieved at a fraction of the cost of Meta’s Llama 3 model, estimated to have cost ~$500 million.

Up top, I suggested that exclusive focus on genAI at the expense of the rest of the field was potentially dangerous. I wasn’t referring to the environmental, financial, or social justice concerns around genAI. They are real, but I was focusing on the opportunity cost. Right now, AI investing looks like an all-in bet on pocket eights before the flop - an excessive bet on an appealing but dangerously weak hand.[4] On a broader societal level, there is a danger that all of the investment money and government or NGO funding will get sucked up by LLMs. Remember, Sam Altman has already suggested that we need to build a Dyson Sphere, in addition to cramming hundreds of data centers into the Arctic Circle, all while we still haven’t seen anything as valuable as rapid, accurate, early detection of medical problems or any of the other ML achievements listed above. In a year or two, we may see trillions of dollars going into generative AI because it might cure cancer (don’t ask how, though!), but there are much cheaper techniques available to us that have already yielded results and will continue to do so, if we can get out of the hype cycle.

[1] For those who are interested, the coding was in Python and related ML libraries (Keras, scikit-learn, PyTorch, etc.). The math we worked with directly included probability, statistics, and linear algebra, with some background in calculus referenced for underlying concepts. The field really is kind of cool.

[2] I’m compiling resources along the way - a la The Paper Chase - and will share at some point.

[3] There have also been cases where ML has created or recreated patterns that have led to harm - profiling, surveillance, biased loan approval systems - by reinforcing social prejudices built into the source dataset. I footnote this not to dismiss its importance, but because I think those problems could have been prevented through better training of the predictive models, higher levels of oversight, or choosing not to use them.

[4] Can’t help but make a poker reference when it’s apt, and this is really apt. A pair of eights in the hole is a classic weak hand that players get attached to. Holding 8-8 before you see any community cards seems great - “I have a pair!” But half of the pairs other players may have are better than your eights, and only an eight can strengthen your hand. For further reference, check out the movie Rounders.
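For the curious, the odds behind the pocket-eights point can be sketched with a little combinatorics. This is a back-of-the-envelope calculation, assuming a standard 52-card deck and ignoring whatever opponents hold; the variable names are only illustrative.

```python
from math import comb

# Card ranks from lowest to highest.
ranks = ["2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K", "A"]

# Of the 12 other possible pocket pairs, count how many outrank eights.
position = ranks.index("8")
lower_pairs = position                       # 2s through 7s
higher_pairs = len(ranks) - 1 - position     # 9s through aces

# With 8-8 in hand, 50 cards are unseen and only 2 of them are eights.
unseen = 50
all_flops = comb(unseen, 3)
flops_without_an_eight = comb(unseen - 2, 3)
p_eight_on_flop = 1 - flops_without_an_eight / all_flops

print(f"Pairs that beat eights: {higher_pairs} of {higher_pairs + lower_pairs}")
print(f"Chance at least one eight appears on the flop: {p_eight_on_flop:.1%}")
```

Six of the twelve other pocket pairs beat eights, and an eight lands on the flop only about 12% of the time, which is why shoving all-in before the flop is such a shaky bet.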

code][D by Kip Voytek

Yes, to more precision in describing the myriad flavors and forms of AI. I've found this taxonomy to be very useful for elevating the discourse with clients (and occasionally with friends and family, though they usually find me tedious): https://dropleaf.app/d/AlXez8scbd

Aside from the unfortunate reference to "Rounders," this was very interesting. I was already trying to avoid saying "AI" when I really meant "LLM"--you've confirmed that position with some actual knowledge.